The main public events of IT companies are concentrated in May and June. Some companies, like Apple, focus on updates to the software on their devices (operating systems and apps); others, like Microsoft and Amazon, present news about their cloud services (Azure and AWS). Google does both: it shows what is new on Android and announces new cloud services. This year, all the events gave wide prominence to AI and machine learning: competition is stiff, and all the companies showed impressive results in different fields, such as image analysis, text understanding, and Natural Language Processing (Salvatore Merone discusses the latter in his article). So, let’s take a look at what was presented at these events in recent weeks.

Google I/O 2021

LaMDA (Language Model for Dialog Applications)

Natural Language Processing has benefited enormously from Google research. LaMDA is a new model based on Transformer, a neural network architecture that Google Research made open source in 2017. It is a dialogue engine that can hold a free-flowing conversation on many topics, following its interlocutor in a completely natural way, unlike its predecessor.

In the demo shown at the event, LaMDA held a conversation playing the part of the planet Pluto and then of a paper airplane.

Google Maps

Two innovations, both based on AI, have been introduced in this long-standing Google product: route computation that is more efficient in terms of fuel consumption, and Safer Routing. The latter will let you follow the safest route to a destination based on weather and traffic conditions.
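To make the idea of a fuel-efficient route concrete, here is a minimal sketch. It is not Google's algorithm, which was not detailed at the event: it simply runs the classic Dijkstra shortest-path search twice on a tiny road graph, once minimizing travel time and once minimizing fuel. The graph `ROADS`, its numbers, and the function `best_route` are all hypothetical and exist only for illustration.

```python
import heapq

# Hypothetical road graph: node -> list of (neighbor, minutes, litres_of_fuel).
ROADS = {
    "A": [("B", 10, 1.2), ("C", 15, 0.7)],
    "B": [("D", 10, 1.1)],
    "C": [("D", 12, 0.6)],
    "D": [],
}

def best_route(graph, start, goal, cost_index):
    """Dijkstra's algorithm; cost_index 0 minimizes minutes, 1 minimizes fuel."""
    queue = [(0.0, start, [start])]  # (accumulated cost, node, path so far)
    settled = set()
    while queue:
        cost, node, path = heapq.heappop(queue)
        if node == goal:
            return cost, path
        if node in settled:
            continue
        settled.add(node)
        for neighbor, *weights in graph[node]:
            if neighbor not in settled:
                new_cost = cost + weights[cost_index]
                heapq.heappush(queue, (new_cost, neighbor, path + [neighbor]))
    return None  # goal not reachable from start

fastest = best_route(ROADS, "A", "D", cost_index=0)
greenest = best_route(ROADS, "A", "D", cost_index=1)
print("fastest: ", fastest[1])
print("greenest:", greenest[1])
```

In this toy graph the two criteria disagree: the fastest route goes through B, while the route that burns the least fuel goes through C. A real navigation system would presumably weigh both criteria together with live traffic and weather data.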

Detection of dermatological problems

After contributing to research on breast cancer and diabetic retinopathy, Google presented a new tool that can detect dermatological problems from a photo taken with a smartphone.

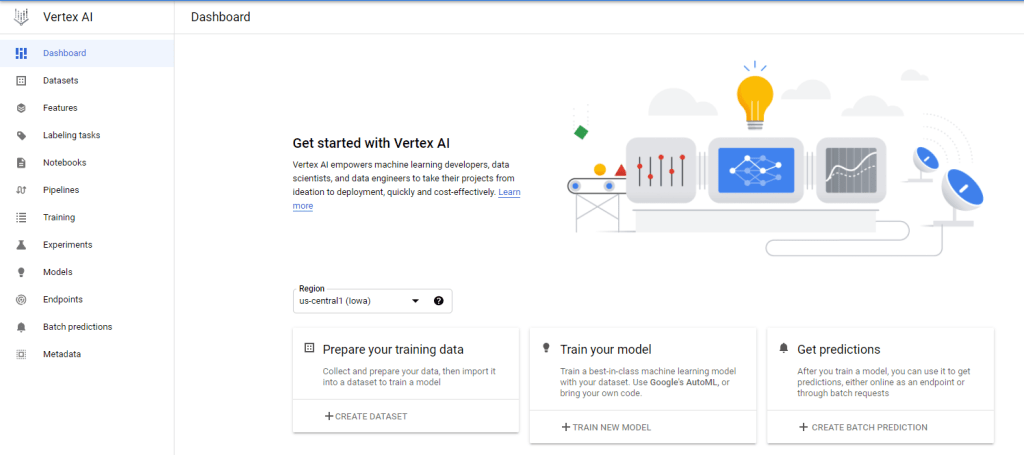

Vertex AI: the new AI cloud platform

Vertex AI is Google’s new machine learning cloud platform, which combines AutoML and AI Platform into a single API, client library, and user interface. The aim is to let you build and deploy ML models faster. According to Google, the platform also introduces advanced tooling that reduces the amount of code to be written by about 80%.

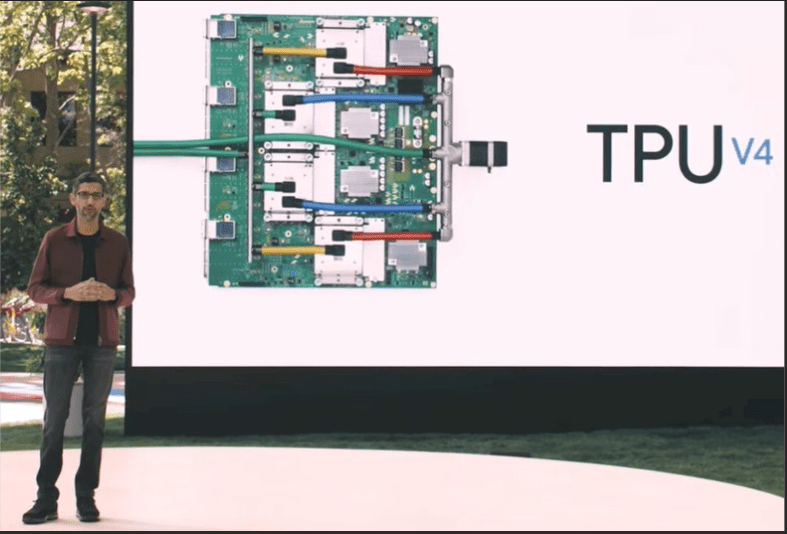

New generation of TPU

Google introduced the fourth generation of its tensor processing units (TPUs). TPUs are a class of microprocessors designed to provide hardware acceleration for neural networks such as image classifiers, natural language processing models, and translation models. TPUv4 clusters, called pods, are made up of 4096 interconnected chips and provide more than an exaflop of computing power, equivalent to about 10 million mid-range laptops. TPUv4 will soon be available in Google Cloud data centers.
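A quick back-of-the-envelope calculation helps put these figures in perspective. The sketch below takes only the numbers quoted above (one exaflop as a lower bound, 4096 chips, 10 million laptops) and derives the implied throughput per chip and per laptop. These are rough estimates derived from the keynote figures, not official per-chip specifications.

```python
# Figures quoted in the keynote; the exaflop is a lower bound ("more than").
POD_FLOPS = 1e18          # 1 exaflop = 10^18 floating-point operations per second
CHIPS_PER_POD = 4096
LAPTOPS = 10_000_000

def per_unit(total_flops: float, units: int) -> float:
    """Split a total throughput evenly across a number of units."""
    return total_flops / units

flops_per_chip = per_unit(POD_FLOPS, CHIPS_PER_POD)
flops_per_laptop = per_unit(POD_FLOPS, LAPTOPS)

print(f"per chip:   {flops_per_chip / 1e12:.0f} TFLOPS")   # prints "per chip:   244 TFLOPS"
print(f"per laptop: {flops_per_laptop / 1e9:.0f} GFLOPS")  # prints "per laptop: 100 GFLOPS"
```

In other words, the comparison assumes a laptop delivering roughly 100 GFLOPS, while each chip in the pod contributes at least about 244 TFLOPS.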

MUM: the new generation of AI algorithms

MUM (Multitask Unified Model) is a new AI model based on the Transformer architecture that Google describes as 1000 times more powerful than its predecessor, BERT. It will make the search engine more effective: we will be able to ask more specific questions and receive more detailed answers. Moreover, MUM not only understands language but can also generate it, and it is trained across 75 different languages.

TensorFlow Lite

TensorFlow is an end-to-end open-source platform for machine learning. It has a comprehensive and flexible ecosystem of tools, libraries, and community resources that lets researchers push the state of the art in ML and lets developers build and deploy ML-based applications faster. The Lite version runs ML models on mobile devices (Android and iOS) and on IoT devices. The most important news, however, is that the same model generated for mobile devices can now also run in the browser for image classification, object detection, and other tasks, thanks to the WebAssembly standard.

Microsoft Build 2021

On May 25th at 6:00 PM CEST, Microsoft CEO Satya Nadella kicked off Build 2021, the company’s annual developer conference, with a virtual keynote in which he outlined Microsoft’s strategy to help developers create the future. You can view it at this link. Prominence was given to developer solutions that support collaboration and productivity: for example, the new integration between the Power Platform and Visual Studio, the latest investments in Java, and enterprise-grade applications in the Azure cloud. New Teams features to improve hybrid work were also shown, built around the possibility of working anywhere, anytime, on any device. On the AI side, Microsoft tried to resolve customer confusion over its artificial intelligence services by introducing Azure Applied AI Services.

Azure Applied AI Services

The basic idea is to bring AI solutions into production in days instead of months, generating value quickly with services specialized for the most common business processes. Task-specific models have been added on top of the existing Cognitive Services, and they can then be extended with the Azure Machine Learning service.

The complete list of the services offered is as follows:

Azure Bot Service: creates bots and connects them to communication channels. The macro area is that of conversations.

Azure Form Recognizer: transforms documents into useful data in no time. The macro area is that of documents.

Azure Cognitive Search: brings a cloud search service to web and mobile applications. The macro area is that of search.

Azure Video Analyzer: extracts useful information from your videos. The macro area is that of video.

Azure Immersive Reader: helps readers read and understand a text. The macro area is that of accessibility.

Azure Metrics Advisor: proactively monitors data from any data source to diagnose and prevent problems. The macro area is that of monitoring.

PyTorch Enterprise on Azure

PyTorch is a successful open-source deep learning framework that Microsoft uses in products such as Bing and Azure Cognitive Services. In collaboration with Facebook, Microsoft announced a new initiative called the PyTorch Enterprise Support Program, which allows service providers to offer enterprise-level support to their customers. As part of it, Microsoft has launched PyTorch Enterprise on Azure to integrate PyTorch with the solutions published on Azure.

Power Apps

Microsoft announced the latest updates to Power Fx, its open-source low-code language. Thanks to the integration of GPT-3, the powerful natural language model developed by OpenAI, into Power Apps, Microsoft’s low-code development platform, anyone will be able to build apps by describing what they want in natural language.

Apple WWDC 2021

The virtual keynote of WWDC, held on June 7th, brought a lot of news about the operating systems running on iPhone, iPad, and Mac. The term “on-device intelligence” was used often to indicate that many AI operations will be carried out locally on the devices, thanks to the Apple Neural Engine. To protect users’ privacy, the Siri assistant will process as much data as possible locally (although Apple does not specify how much). For example, the audio analyzed for speech recognition will never leave the device, which dispels the concern that Apple may keep recordings of our voice. Apart from privacy, interacting with Siri will also be faster, because many requests will no longer need an internet connection: launching apps, changing settings, controlling music, adding a note, and so on. The new Live Text feature will also use on-device AI to recognize the text in a photo (and possibly translate it). For example, it will be possible to capture a telephone number from a sign with the camera and start the call. Spotlight, in turn, will be able to search for people, scenes, and objects in our photos.

AWS Machine Learning Summit

The virtual keynote of the event organized by Amazon began by recalling that machine learning is now mainstream and that over 100,000 customers use AWS for machine learning (for example, the New York Times and pharmaceutical giants like Roche). Numerous success stories based on SageMaker were presented at the event (you can register here to access the video). SageMaker is the platform that lets developers and data scientists rapidly prepare, build, train, and deploy high-quality models. It is evolving along three directions: ML infrastructure, tools, and industrialization.

The keynote also covered AWS Inferentia, Amazon’s first custom silicon chip designed to accelerate deep learning workloads. The AWS Neuron Software Development Kit (SDK) consists of a compiler, a runtime, and profiling tools that help optimize the performance of workloads running on AWS Inferentia. Developers can take complex neural network models built and trained with popular frameworks such as TensorFlow, PyTorch, and MXNet and deploy them on AWS Inferentia-based Amazon EC2 Inf1 instances. By the end of the year, new chips, such as Trainium, will be available on Amazon EC2 instances.

Every person who works for Amazon must learn machine learning. For this reason, a MOOC (massive open online course) called Practical Data Science, entirely dedicated to deep learning, was presented on Coursera.

Conclusions

AI makes applications running on devices more impressive and powerful: photos, maps, search, text comprehension, translation, and so on. A few years ago it would have been impossible to perform all these calculations locally. Today it is possible, thanks to greater computing power, and it is even necessary in order to protect users’ privacy.

Google and Apple blew us away with their demos, and we can’t wait to get access to these new products. Build, on the other hand, showed that Microsoft is mainly interested in offering services on the Azure cloud to create enterprise AI and low-code applications. There were, therefore, no sensational announcements that would attract the media’s attention; even so, the number of companies that use the new services will certainly increase significantly in the coming years. Finally, as usual, Amazon highlighted its market-leading position as a cloud service provider thanks to products like SageMaker.

At all the events, companies presented training platforms, online courses, tutorials, workshops, and other tools designed to train users. This highlights the need to democratize artificial intelligence and to move beyond the myth of the mysterious figure of the Data Scientist, which has been talked about a lot in recent years.

There is no revolution in sight, but the increasingly intensive exploitation of local computing power will allow us to add intelligence to our applications in both input capture and data analysis.

If you are curious to know how we will use the great potential of the announced tools, keep following us!